Last month we covered FDMEE and Essbase (BSO) calculations. This month, let’s take a look at FDMEE integration with ASO. With BSO, we set up calculation scripts to do a clear before the data load and an aggregation after the load. With ASO, there are no calculation scripts so we can’t use the same functionality.

Partial clears in ASO can be done using MaxL, the Essbase scripting language. As those of you familiar with Essbase already know, no aggregation is needed after a data load to ASO because those cubes aggregate dynamically.

There are several ways to accomplish these clears, and most of them revolve around Event Scripts in FDMEE. Event Scripts are written in Jython or Visual Basic. Jython is an implementation of the Python programming language designed to run on the Java platform. I find Jython more powerful than Visual Basic and fairly easy to learn and work with, so that is what I use when writing Event Scripts.

The FDMEE integration process has many points where we can insert custom code through an Event Script. Each FDMEE process step has a before and an after script that can be customized. Since we want the existing data to remain in our Essbase ASO application for as long as possible, we will run our clear process in the BefLoad script, which executes just before the data load.

It’s possible to call a batch file that will execute your MaxL script to run the clear, but I like to call MaxL directly from Jython. This method requires that the Essbase Client is installed on your FDMEE server so that the startMaxl.cmd script is available. Of course, we should be using encrypted MaxL scripts so that our passwords are not visible in either the Jython BefLoad.py script or in our MaxL script.

In this hypothetical situation, our Club Electronics stores in Delaware have submitted their ATM Sales. Let’s say that Club Electronics submits a new file each day to update our ASOSamp application with the month-to-date sales numbers. To make sure that we are loading the correct data each time, we need to clear the existing Delaware ATM sales for Club Electronics for the current month and current year.

This scenario could be handled by hard-coding the Delaware values in MaxL, but we have other states that submit similar files using different Locations in FDMEE. To make our clear script usable by multiple stores and entities, we can pass variables from Jython to MaxL and dynamically clear portions of our ASO cube based on the Location (or the Data Load Rule, an integration option set in FDMEE, or many other variables).
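One way to keep the clear reusable across Locations is a small lookup in the Jython script. This is only a sketch: the dictionary, the function name, and the second Location are hypothetical, and the member names are placeholders that would have to match your outline.

```python
# Hypothetical lookup: FDMEE Location name -> members to clear in ASOSamp.
# Only CE_ATM_Sales comes from this post; CE_ATM_Sales_MD is invented
# purely to show a second entry.
CLEAR_PARAMS = {
    "CE_ATM_Sales": {"geography": "Delaware", "stores": "Club Electronics"},
    "CE_ATM_Sales_MD": {"geography": "Maryland", "stores": "Club Electronics"},
}


def get_clear_params(location, period_month):
    """Return [geography, month, stores] for a Location, or None if no clear applies."""
    entry = CLEAR_PARAMS.get(location)
    if entry is None:
        return None
    # Order matches the three parameters the MaxL script expects.
    return [entry["geography"], period_month, entry["stores"]]
```

Returning None for an unknown Location lets the calling script skip the clear entirely instead of clearing the wrong slice.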

So, let’s begin in FDMEE with our file integration using ASOSamp as our Target Application. I have already set up ASOSamp as a Target Application, created our Import Format for a comma delimited file, created our Location for Club Electronics ATM Sales (CE_ATM_Sales), and created the Data Load Rule to load this data.

MaxL Script

Our MaxL script accepts three parameters: Geography, Month, and Stores. We have our MaxL encrypted so that no passwords are stored in plain text. The trick to getting this to work, I have found, is using double quotes around the MDX expression in the MaxL statement. MaxL expands variables inside double-quoted strings but not inside single-quoted ones, so the double quotes allow it to properly evaluate the variables. You can hard-code some or all of the MDX tuple; I did a little of both here.

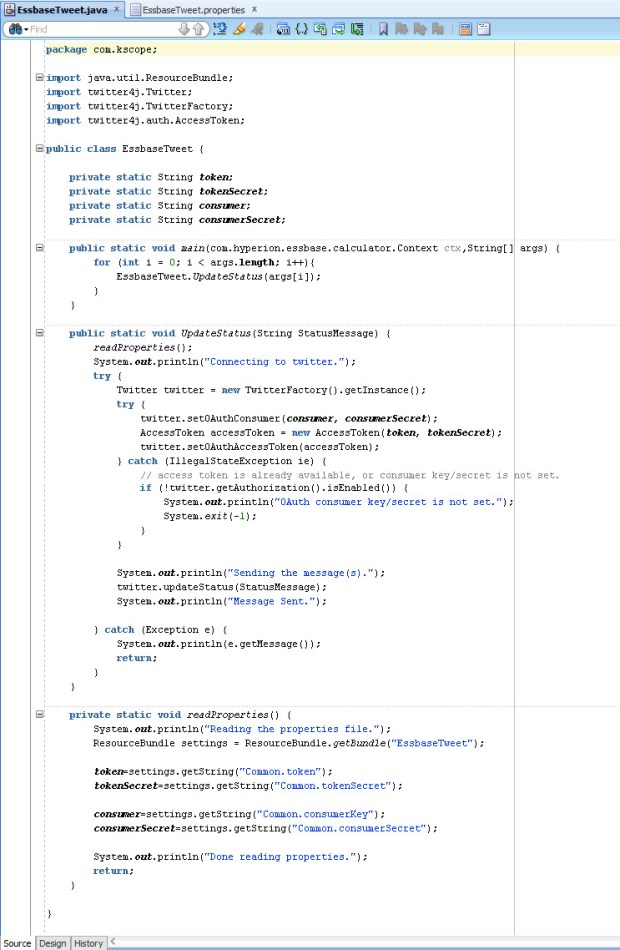
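For readers who cannot see the screenshot, here is my assumption of the general shape of such a statement, held in a Python string so the substitution can be previewed. The exact tuple, the `[Curr Year]` and `[ATM]` members, and the `physical` keyword are guesses for illustration, not a copy of the script above. `preview_statement` is a hypothetical helper that only mimics what MaxL does with `$1`, `$2`, and `$3`.

```python
# Assumed shape of the clear statement; the real script is in the screenshot.
# $1, $2, $3 are MaxL positional parameters (Geography, Month, Stores).
# [Curr Year] and [ATM] stand in for the hard-coded part of the tuple.
MAXL_CLEAR = (
    'alter database ASOSamp.Sample clear data in region '
    '"{([$1], [$2], [$3], [Curr Year], [ATM])}" physical;'
)


def preview_statement(statement, args):
    """Mimic MaxL positional substitution so the final MDX can be checked by eye."""
    # Replace the highest-numbered placeholder first so $10 is not hit by $1.
    for position in range(len(args), 0, -1):
        statement = statement.replace("$%d" % position, args[position - 1])
    return statement


print(preview_statement(MAXL_CLEAR, ["Delaware", "Jul", "Club Electronics"]))
```

Previewing the expanded statement this way is handy when a clear removes nothing: a misspelled member or a missing bracket is usually easy to spot in the printed MDX.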

Jython BefLoad.py Script

In the BefLoad script, we first test which FDMEE Location is being loaded to ensure we are running the proper code. This test can also be done at the Load Rule level if you have multiple rules in the same Location. Next, the script calls startMaxl.cmd, which is installed as part of the Essbase Client installation, and passes the variables to the MaxL script.
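The flow just described can be sketched roughly as follows. This is not the script from my environment: the script name, the private key placeholder, and the function names are hypothetical, and I am assuming startMaxl.cmd hands its arguments (including `-D` for an encrypted script) straight through to the MaxL shell. It also passes the command as a list, which lets `subprocess` handle the quoting instead of building one command string by hand.

```python
import subprocess

TARGET_LOCATION = "CE_ATM_Sales"   # Location from this post
START_MAXL = "startMaxl.cmd"       # assumed to be on the PATH of the FDMEE server
MAXL_SCRIPT = "ClearASO.mxls"      # hypothetical encrypted MaxL script name
PRIVATE_KEY = "PRIVATE_KEY_HERE"   # placeholder for the decryption key


def build_maxl_command(params):
    """Build the argument list: startMaxl.cmd -D <script> <key> <param1> ..."""
    return [START_MAXL, "-D", MAXL_SCRIPT, PRIVATE_KEY] + list(params)


def run_clear(location, params, log, run=subprocess.call):
    """Run the MaxL clear only when the expected Location is being loaded."""
    if location != TARGET_LOCATION:
        log("BefLoad Script: no clear configured for location %s" % location)
        return None
    log("Submitting MaxL to selectively clear ASOSamp")
    return_code = run(build_maxl_command(params))
    # Simplification: a zero return code shows the shell ran, not that
    # every MaxL statement inside the script succeeded.
    if return_code == 0:
        log("MaxL commands to ASOSamp were successful")
    else:
        log("MaxL returned code %s" % return_code)
    return return_code


# Inside BefLoad.py, where FDMEE supplies fdmContext and fdmAPI:
# run_clear(fdmContext["LOCNAME"],
#           ["Delaware", fdmContext["PERIODNAME"], "Club Electronics"],
#           fdmAPI.logInfo)
```

Passing the logger and the runner in as arguments keeps the function testable outside FDMEE, where `fdmAPI` and `fdmContext` do not exist.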

Passing Variables

The trick to getting all of this to work is the ability to pass variables: either dynamic variables that come from FDMEE (Location name, POV month, etc.) or static variables that we have coded into the Jython script. In the example above, I show how to pass a variable with a space from Jython to MaxL. By escaping the double quote (") with a backslash (\), we are able to pass the variable from Jython to the Windows Command Prompt surrounded in double quotes ("). Without the escape character, the variable will not get passed correctly.
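As a minimal illustration of that escaping, the snippet below builds a command string in which the value with a space arrives wrapped in literal double quotes. The helper name and the script name are hypothetical; only startMaxl.cmd and the member name come from this post.

```python
def quote_for_cmd(value):
    """Wrap a value in literal double quotes so it is passed as one argument."""
    # \" inside a Python string literal produces a real double quote character.
    return "\"" + value + "\""


stores = "Club Electronics"  # static value containing a space
month = "Jul"                # would come from the FDMEE POV in a real script

# ClearASO.mxl is a placeholder script name.
command = "startMaxl.cmd ClearASO.mxl %s %s" % (month, quote_for_cmd(stores))
print(command)
```

Without the quotes, the command line would split Club Electronics into two arguments, and MaxL would receive `Club` as the third parameter. This helper does not handle values that themselves contain double quotes.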

Logs

In our FDMEE process logs, we can see that the code is running properly thanks to the fdmAPI.logInfo lines we added to the BefLoad script:

2016-07-22 17:53:11,871 INFO [AIF]: Executing the following script: C:\FDMEE/data/scripts/event/BefLoad.py

2016-07-22 17:53:11,923 INFO [AIF]: ======================================================================

2016-07-22 17:53:11,923 INFO [AIF]: BefLoad Script: Begin

2016-07-22 17:53:11,923 INFO [AIF]: ======================================================================

2016-07-22 17:53:11,923 INFO [AIF]: ======================================================================

2016-07-22 17:53:11,923 INFO [AIF]: Submitting MaxL to selectively clear ASOSamp

2016-07-22 17:53:11,923 INFO [AIF]: ======================================================================

2016-07-22 17:53:13,141 INFO [AIF]: ======================================================================

2016-07-22 17:53:13,141 INFO [AIF]: MaxL commands to ASOSamp were successful

2016-07-22 17:53:13,141 INFO [AIF]: ======================================================================

2016-07-22 17:53:13,141 INFO [AIF]: ======================================================================

2016-07-22 17:53:13,141 INFO [AIF]: BefLoad Script: END

2016-07-22 17:53:13,141 INFO [AIF]: ======================================================================

2016-07-22 17:53:14,825 INFO [AIF]: EssbaseService.loadData - START

In Essbase, we can also verify that the MaxL code is executing properly by checking the ASOSamp application log:

[Fri Jul 22 17:53:12 2016]Local/ASOsamp///6600/Info(1013210)

User [admin@Native Directory] set active on database [Sample]

[Fri Jul 22 17:53:12 2016]Local/ASOsamp///6544/Info(1042059)

Connected from [::1]

[Fri Jul 22 17:53:12 2016]Local/ASOsamp/Sample/admin@Native Directory/6544/Info(1013091)

Received Command [AsoClearRegion] from user [admin@Native Directory]

[Fri Jul 22 17:53:13 2016]Local/ASOsamp/Sample/admin@Native Directory/6544/Info(1270602)

Removed [25] cells from input view. Partial Data Clear Elapsed Time [0.258334] sec

[Fri Jul 22 17:53:13 2016]Local/ASOsamp/Sample/admin@Native Directory/6544/Info(1013273)

Database ASOSamp.Sample altered

[Fri Jul 22 17:53:13 2016]Local/ASOsamp///7080/Info(1013214)

Clear Active on User [admin@Native Directory] Instance [1]

With the ability to call MaxL directly as part of FDMEE scripts, your integration is limited only by your imagination. To take this post a step further, you might update substitution variables in Essbase based on the FDMEE POV that is being loaded, or even build aggregate views using MaxL in the AftLoad.py script, without much additional effort.

